claude-code-nix-sandbox

Warning: This project is under active development and should be considered unstable. Features may be incomplete, broken, or change without notice. If you choose to run it, you do so at your own risk. There are no guarantees of correctness, security, or fitness for any particular purpose.

Launch sandboxed Claude Code sessions with Chromium using Nix.

Claude Code (from sadjow/claude-code-nix) runs inside an isolated sandbox with filesystem isolation, display forwarding, and a Chromium browser. Three backends are available with increasing isolation strength:

| Backend | Isolation | Requires |

|---|---|---|

| Bubblewrap | User namespaces, shared kernel | Unprivileged |

| systemd-nspawn | Full namespace isolation | Root (sudo) |

| QEMU VM | Separate kernel, hardware virtualization | KVM recommended |

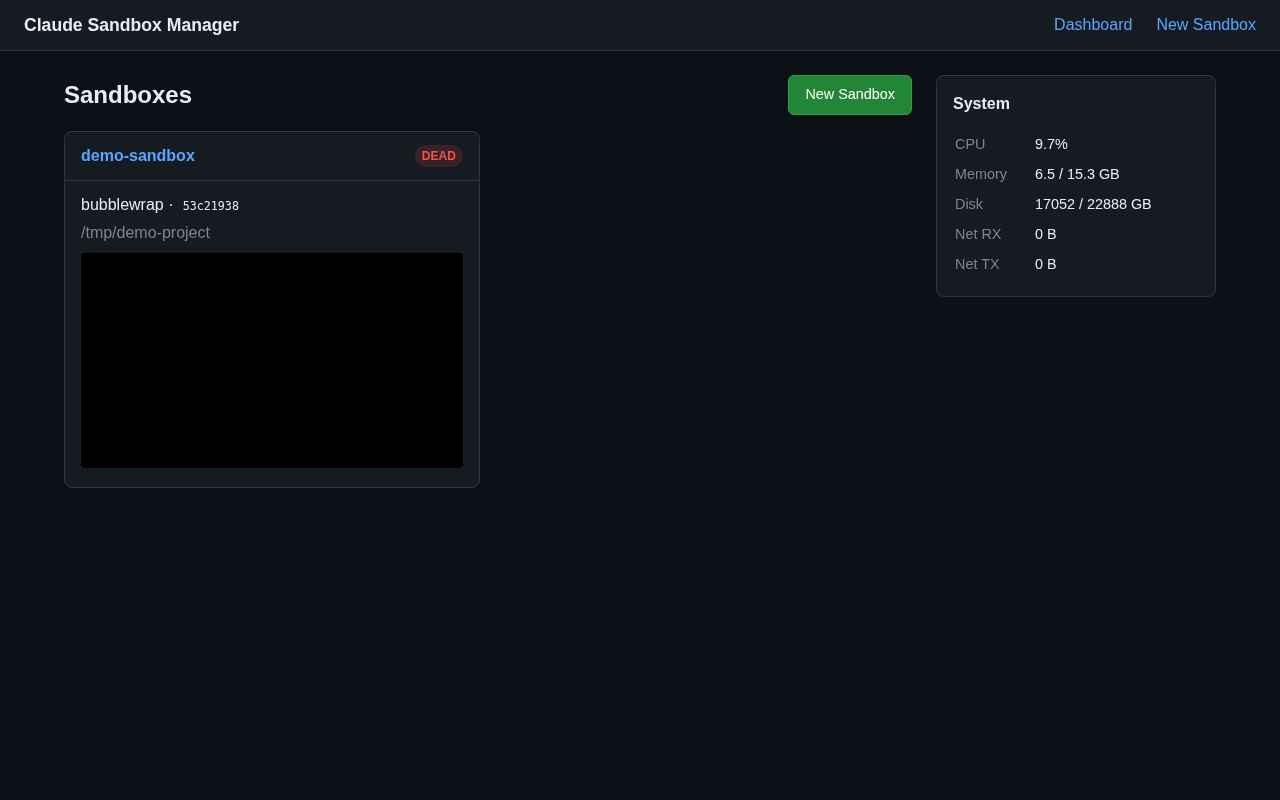

A remote sandbox manager is also provided: a Rust/Axum daemon with a web dashboard and CLI for managing sandboxes on a server over SSH.


Features

- Pure Nix: no shell/Python wrappers; all orchestration in Nix

- Chromium from nixpkgs: always pkgs.chromium inside the sandbox

- Git/SSH forwarding: push/pull works inside all backends

- Nix commands: NIX_REMOTE=daemon forwarding so nix build works inside sandboxes

- Display forwarding: X11, Wayland, GPU acceleration (bubblewrap/container) or QEMU window (VM)

- Audio forwarding: PipeWire/PulseAudio (bubblewrap/container)

- D-Bus session bus proxy: filtered via xdg-dbus-proxy (keyring/Secret Service only, blocks Chromium singleton collisions)

- Remote management: web dashboard with live screenshots, real-time log streaming via WebSocket, metrics, and a CLI over SSH

Quick Start

# Install both claude-sandbox and claude-code (bundled)

nix profile install github:jhhuh/claude-code-nix-sandbox

# Bubblewrap (unprivileged)

nix run github:jhhuh/claude-code-nix-sandbox#sandbox -- /path/to/project

# systemd-nspawn container (requires sudo)

nix build github:jhhuh/claude-code-nix-sandbox#container

sudo ./result/bin/claude-sandbox-container /path/to/project

# QEMU VM (strongest isolation)

nix build github:jhhuh/claude-code-nix-sandbox#vm

./result/bin/claude-sandbox-vm /path/to/project

Requires ANTHROPIC_API_KEY in your environment, or an existing ~/.claude login (auto-mounted).

See Getting Started for full details.

Getting Started

Requirements

- NixOS or Nix with flakes enabled on Linux

- User namespaces for the bubblewrap backend (enabled by default on most distros)

- X11 or Wayland display server for bubblewrap/container backends

- KVM recommended for the VM backend (/dev/kvm)

- ANTHROPIC_API_KEY in your environment, or an existing ~/.claude login (auto-mounted)
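The authentication and KVM requirements can be checked before launching a backend. The following is a minimal sketch and not part of the project; the helper names have_auth and have_kvm are hypothetical.

```shell
#!/bin/sh
# Hypothetical preflight helpers; not part of claude-code-nix-sandbox.

# Succeeds if an API key is set or a ~/.claude login directory exists.
have_auth() {
  [ -n "${ANTHROPIC_API_KEY:-}" ] || [ -d "${HOME:-}/.claude" ]
}

# Succeeds if /dev/kvm is readable and writable by the current user.
have_kvm() {
  [ -r /dev/kvm ] && [ -w /dev/kvm ]
}

have_auth || echo "warning: set ANTHROPIC_API_KEY or create a ~/.claude login" >&2
have_kvm  || echo "note: /dev/kvm is not usable; the VM backend will be slow" >&2
```

Both checks only print a message; they do not stop a launch.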

Quick Start

Install (both sandboxed and un-sandboxed)

The default package bundles claude-sandbox (bubblewrap) and claude (un-sandboxed) together:

# Install both binaries

nix profile install github:jhhuh/claude-code-nix-sandbox

# Update to latest

nix profile upgrade claude-code-nix-sandbox --refresh

Bubblewrap (unprivileged)

# Run Claude Code in a sandbox

nix run github:jhhuh/claude-code-nix-sandbox#sandbox -- /path/to/project

# Run inside tmux (needed for agent teams)

nix run github:jhhuh/claude-code-nix-sandbox#sandbox -- --tmux /path/to/project

# Drop into a shell inside the sandbox

nix run github:jhhuh/claude-code-nix-sandbox#sandbox -- --shell /path/to/project

systemd-nspawn container (requires sudo)

nix build github:jhhuh/claude-code-nix-sandbox#container

sudo ./result/bin/claude-sandbox-container /path/to/project

# Shell mode

sudo ./result/bin/claude-sandbox-container --shell /path/to/project

QEMU VM (strongest isolation)

Claude runs on the serial console in your terminal. Chromium renders in the QEMU display window.

nix build github:jhhuh/claude-code-nix-sandbox#vm

./result/bin/claude-sandbox-vm /path/to/project

# Shell mode

./result/bin/claude-sandbox-vm --shell /path/to/project

What gets forwarded

All three backends automatically forward these from your host:

- ~/.claude: auth persistence (read-write)

- ~/.gitconfig, ~/.config/git/, ~/.ssh/: git/SSH config (read-only)

- SSH_AUTH_SOCK: SSH agent forwarding

- ANTHROPIC_API_KEY: API key (if set)

- /nix/store: Nix store (read-only) + daemon socket

Available packages

| Package | Binary | Description |

|---|---|---|

| default | claude-sandbox, claude | Bubblewrap sandbox + un-sandboxed claude-code (bundled) |

| sandbox | claude-sandbox | Bubblewrap sandbox only |

| no-network | claude-sandbox | Bubblewrap without network |

| container | claude-sandbox-container | systemd-nspawn with network |

| container-no-network | claude-sandbox-container | systemd-nspawn without network |

| vm | claude-sandbox-vm | QEMU VM with NAT |

| vm-no-network | claude-sandbox-vm | QEMU VM without network |

| manager | claude-sandbox-manager | Remote sandbox manager daemon |

| cli | claude-remote | CLI for remote management |

Build any package with:

nix build github:jhhuh/claude-code-nix-sandbox#<package>

Sandbox Backends

All three backends share a common pattern: they are callPackage-able Nix functions that produce writeShellApplication derivations. Each accepts network (bool) and backend-specific customization options.

Comparison

| Resource | Bubblewrap | Container | VM |

|---|---|---|---|

| Project directory | Read-write (bind-mount) | Read-write (bind-mount) | Read-write (9p) |

| ~/.claude | Read-write (bind-mount) | Read-write (bind-mount) | Read-write (9p) |

| ~/.gitconfig, ~/.ssh | Read-only (bind-mount) | Read-only (bind-mount) | Read-only (9p) |

| /nix/store | Read-only | Read-only | Shared from host |

| /home | Isolated (tmpfs) | Isolated | Separate filesystem |

| Network | Shared by default | Shared by default | NAT by default |

| Display | Host X11/Wayland | Host X11/Wayland | QEMU window (Xorg) |

| Audio | PipeWire/PulseAudio | PipeWire/PulseAudio | Isolated |

| GPU (DRI) | Forwarded | Forwarded | Virtio VGA |

| D-Bus | Forwarded | Forwarded | Isolated |

| SSH agent | Forwarded | Forwarded | Isolated |

| Nix commands | Via daemon | Via daemon | Local store |

| GitHub CLI config | Forwarded | Forwarded | Forwarded (9p) |

| Locale | Forwarded | Forwarded | Forwarded (meta) |

| Kernel | Shared | Shared | Separate |

Choosing a backend

- Bubblewrap — fastest startup, least overhead, good for day-to-day use. Shares the host kernel and network by default. Requires user namespace support.

- Container — stronger isolation with separate PID/mount/IPC namespaces. Requires root. Good when you need namespace-level isolation without the overhead of a VM.

- VM — strongest isolation with a separate kernel. Best for untrusted workloads. Requires KVM for reasonable performance. Chromium renders in the QEMU window rather than forwarding to the host display.

Common flags

All backends accept:

[--shell] [--gh-token] <project-dir> [claude args...]

- --shell: drop into bash instead of launching Claude Code

- --gh-token: forward GH_TOKEN/GITHUB_TOKEN env vars into the sandbox

- <project-dir>: the directory to mount read-write inside the sandbox

- Additional arguments after the project directory are passed to claude
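The flag layout above can be handled with a small parsing loop. This is a hypothetical sketch of that pattern, not the wrappers' actual code; parse_args and its "|"-separated output are made up for illustration, and backend-specific flags such as --tmux are not covered.

```shell
#!/bin/sh
# Hypothetical sketch of the common flag handling; the real wrappers may differ.
# Prints the parsed result as "mode|gh_token|project_dir|remaining args".
parse_args() {
  mode=claude      # what to launch: "claude" or "shell"
  gh_token=no      # whether to forward GH_TOKEN/GITHUB_TOKEN
  # Consume leading flags; the first non-flag argument is the project directory.
  while [ $# -gt 0 ]; do
    case "$1" in
      --shell)    mode=shell;   shift ;;
      --gh-token) gh_token=yes; shift ;;
      --*)        echo "unknown flag: $1" >&2; return 2 ;;
      *)          break ;;
    esac
  done
  if [ $# -eq 0 ]; then
    echo "usage: [--shell] [--gh-token] <project-dir> [claude args...]" >&2
    return 2
  fi
  project_dir=$1
  shift
  # Everything after the project directory is passed through untouched.
  printf '%s|%s|%s|%s\n' "$mode" "$gh_token" "$project_dir" "$*"
}

parse_args --shell --gh-token /path/to/project
parse_args /path/to/project --resume
```

Parsing stops at the first non-flag argument, so anything after the project directory, including options that start with "--", is left for claude.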

Bubblewrap Backend

The default backend. Uses bubblewrap (bwrap) to create a lightweight sandbox using Linux user namespaces. No root required.

Usage

# Build

nix build github:jhhuh/claude-code-nix-sandbox

# Run

./result/bin/claude-sandbox /path/to/project

./result/bin/claude-sandbox --shell /path/to/project

# Run inside tmux (needed for agent teams)

./result/bin/claude-sandbox --tmux /path/to/project

# Without network

nix build github:jhhuh/claude-code-nix-sandbox#no-network

./result/bin/claude-sandbox /path/to/project

How it works

The sandbox script imports nix/sandbox-spec.nix for the canonical package list and builds a symlinkJoin of spec.packages plus chromiumSandbox and any extraPackages into a single PATH. Host /etc paths are also driven by the spec. It then calls bwrap with:

- Filesystem: /nix/store read-only, project directory read-write, ~/.claude read-write, /home as tmpfs

- Display: X11 socket + Xauthority, Wayland socket forwarded

- D-Bus: system bus and session bus forwarded (Chromium isolated from session bus via env -u DBUS_SESSION_BUS_ADDRESS in wrapper to prevent singleton collisions)

- GPU: /dev/dri and /run/opengl-driver forwarded for hardware acceleration

- Audio: PipeWire and PulseAudio sockets forwarded

- Network: shared with host by default, --unshare-net when network = false

- Nix: daemon socket forwarded with NIX_REMOTE=daemon

The sandbox home is /home/sandbox. The process runs as your user (no UID mapping).
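To make the bwrap invocation more concrete, here is a heavily simplified sketch that prints a few of the arguments involved. It is an illustration, not the generated script: the helper name bwrap_args is hypothetical, and the mount targets for the project directory and ~/.claude are assumptions. The options themselves (--ro-bind, --bind, --tmpfs, --setenv, --unshare-net) are standard bubblewrap flags.

```shell
#!/bin/sh
# Simplified illustration only; the real script passes many more options.
# Prints bwrap arguments, one per line, for a project dir and network setting.
bwrap_args() {
  project_dir=$1
  network=$2   # "true" or "false"
  printf '%s\n' \
    --ro-bind /nix/store /nix/store \
    --tmpfs /home \
    --bind "$project_dir" "$project_dir" \
    --bind "$HOME/.claude" /home/sandbox/.claude \
    --setenv HOME /home/sandbox \
    --setenv NIX_REMOTE daemon
  # network = false corresponds to unsharing the network namespace.
  if [ "$network" = false ]; then
    printf '%s\n' --unshare-net
  fi
}

bwrap_args /path/to/project false
```

bwrap applies mounts in argument order, which is why the tmpfs for /home comes before the bind mounts placed underneath it.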

tmux mode (--tmux)

The --tmux flag starts claude-code inside a tmux session, required for Claude Code’s experimental agent teams feature. The tmux state is stored per-project in <project-dir>/.tmux/:

- tmux.conf: minimal config, created on first run, editable and persistent across restarts

- socket: tmux server socket (runtime, per-project to avoid collisions)

The session is named sandbox:<project-name> with an orange status bar to visually distinguish it from host tmux sessions.

Nix parameters

| Parameter | Type | Default | Description |

|---|---|---|---|

| network | bool | true | Allow network access (false adds --unshare-net) |

| extraPackages | list of packages | [] | Additional packages on PATH inside the sandbox |

Customization example

pkgs.callPackage ./nix/backends/bubblewrap.nix {
  extraPackages = [ pkgs.python3 pkgs.nodejs ];
  network = false;
}

Requirements

- Linux with user namespace support (security.unprivilegedUsernsClone = true on NixOS)

- X11 or Wayland display server

systemd-nspawn Container Backend

Uses systemd-nspawn for container-level isolation with separate PID, mount, and IPC namespaces. Requires root.

Usage

# Build

nix build github:jhhuh/claude-code-nix-sandbox#container

# Run (requires sudo)

sudo ./result/bin/claude-sandbox-container /path/to/project

sudo ./result/bin/claude-sandbox-container --shell /path/to/project

# Without network

nix build github:jhhuh/claude-code-nix-sandbox#container-no-network

sudo ./result/bin/claude-sandbox-container /path/to/project

How it works

The backend imports nix/sandbox-spec.nix for the canonical package list and evaluates a NixOS configuration (nixosSystem) with spec.packages in environment.systemPackages to produce a system closure (toplevel). Host /etc paths are also driven by the spec. At runtime it:

- Creates an ephemeral container root in /tmp/claude-nspawn.XXXXXX

- Creates stub files (os-release, machine-id) and passwd/group entries

- Detects the real user’s UID/GID via SUDO_USER for file ownership

- Launches systemd-nspawn --ephemeral with bind-mounts for the project, display, audio, GPU, etc.

- Runs as PID 2 (--as-pid2), then uses setpriv to drop from root to the real user’s UID/GID

The project directory is mounted at /project inside the container.

Why setpriv instead of su/runuser

The container uses setpriv --reuid --regid --init-groups to drop privileges because su and runuser require PAM, which isn’t available in the minimal container environment. See artifacts/skills/nspawn-privilege-drop-without-pam.md for details.

UID/GID mapping

The real user’s UID and GID are detected from SUDO_USER/SUDO_HOME environment variables (set by sudo). A sandbox user is created inside the container with matching UID/GID so that files created in the project directory have correct ownership on the host. See artifacts/skills/sudo-aware-uid-detection-for-containers.md.
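The detection and privilege drop described in the last two subsections can be sketched as follows. This is a hypothetical illustration, not the backend's script; real_ids and drop_privs_cmd are made-up helper names, and the setpriv command is only printed, not executed.

```shell
#!/bin/sh
# Hypothetical sketch; the real backend script may differ.

# Prints "uid gid" of the user who invoked sudo, or of the current user when
# SUDO_USER is not set.
real_ids() {
  if [ -n "${SUDO_USER:-}" ]; then
    printf '%s %s\n' "$(id -u "$SUDO_USER")" "$(id -g "$SUDO_USER")"
  else
    printf '%s %s\n' "$(id -u)" "$(id -g)"
  fi
}

# Prints the setpriv command line that would drop to those IDs before running
# the given command. --init-groups sets up the user's supplementary groups.
drop_privs_cmd() {
  ids=$(real_ids)
  printf 'setpriv --reuid=%s --regid=%s --init-groups %s\n' \
    "${ids% *}" "${ids#* }" "$*"
}

drop_privs_cmd claude
```

Unlike su or runuser, setpriv changes the IDs directly through system calls, so no PAM stack is needed inside the container.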

Nix parameters

| Parameter | Type | Default | Description |

|---|---|---|---|

| network | bool | true | Allow network access (false adds --private-network) |

| extraModules | list of NixOS modules | [] | Extra NixOS config for the container |

| nixos | function | (required) | NixOS evaluator, typically args: nixpkgs.lib.nixosSystem { ... } |

Customization example

pkgs.callPackage ./nix/backends/container.nix {
  nixos = args: nixpkgs.lib.nixosSystem {
    system = "x86_64-linux";
    modules = args.imports;
  };
  extraModules = [{
    environment.systemPackages = with pkgs; [ python3 nodejs ];
  }];
}

Forwarded resources

- X11 display socket and Xauthority (copied into container root)

- Wayland socket

- D-Bus system bus and session bus (Chromium isolated from session bus via wrapper)

- GPU (/dev/dri, /dev/shm, /run/opengl-driver)

- PipeWire and PulseAudio sockets

- SSH agent (remapped to /run/user/<uid>/ssh-agent.sock)

- Git config and SSH keys (read-only)

- ~/.claude auth directory (read-write)

- Nix store, database, and daemon socket

- Host DNS, TLS certificates, fonts, timezone

- Locale (LANG, LC_ALL)

QEMU VM Backend

The strongest isolation backend. Runs a full NixOS virtual machine with a separate kernel. Claude Code runs on the serial console (in your terminal), while Chromium renders in the QEMU display window (Xorg + Openbox).

Usage

# Build

nix build github:jhhuh/claude-code-nix-sandbox#vm

# Run

./result/bin/claude-sandbox-vm /path/to/project

./result/bin/claude-sandbox-vm --shell /path/to/project

# Without network

nix build github:jhhuh/claude-code-nix-sandbox#vm-no-network

./result/bin/claude-sandbox-vm /path/to/project

How it works

The backend imports nix/sandbox-spec.nix for the canonical package list and Chrome extension IDs, then evaluates a NixOS VM configuration using the qemu-vm.nix module. The VM is configured with:

- 4 GB RAM, 4 cores (defaults from virtualisation module)

- Serial console on stdio for Claude Code interaction

- QEMU GTK window running Xorg + Openbox for Chromium display

- 9p filesystem shares for project directory, auth, git config, SSH keys, and metadata

Console setup

The VM has two consoles: tty0 (QEMU window) and ttyS0 (serial/stdio). The serial console is listed last in virtualisation.qemu.consoles so Linux makes it /dev/console. Getty auto-logs in the sandbox user on ttyS0.

A tty guard in interactiveShellInit ensures the entrypoint (Claude Code or bash) only runs on ttyS0, not on the graphical tty0. See artifacts/skills/nixos-qemu-vm-serial-console-setup.md.
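A tty guard of this kind amounts to comparing the current terminal device with the serial console. The sketch below is hypothetical (on_serial_console is a made-up helper, and the real interactiveShellInit snippet may be written differently).

```shell
#!/bin/sh
# Hypothetical sketch of a tty guard; the real interactiveShellInit may differ.

# Succeeds only when the given terminal device is the serial console.
on_serial_console() {
  [ "$1" = /dev/ttyS0 ]
}

# In a shell init file the guard would wrap the entrypoint, for example:
if on_serial_console "$(tty 2>/dev/null || true)"; then
  echo "serial console: the entrypoint would start here"
fi
```

On any other terminal, including the graphical tty0, the condition is false and the shell starts normally.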

9p filesystem shares

| Mount point | Tag | Mode | Description |

|---|---|---|---|

| /project | project_share | Read-write | Project directory |

| /home/sandbox/.claude | claude_auth | Read-write, nofail | Auth persistence |

| /home/sandbox/.gitconfig | git_config | Read-only, nofail | Git config |

| /home/sandbox/.config/git | git_config_dir | Read-only, nofail | Git config directory |

| /home/sandbox/.config/gh | gh_config_dir | Read-only, nofail | GitHub CLI config |

| /home/sandbox/.ssh | ssh_dir | Read-only, nofail | SSH keys |

| /mnt/meta | claude_meta | Read-only | Entrypoint and API key |

The project directory share uses msize=104857600 (100 MiB) to improve I/O throughput. The nofail option allows the VM to boot even if the host directory doesn’t exist.
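For orientation, the msize value and a manual equivalent of such a mount look roughly like this. In the project the mounts come from the NixOS VM configuration, so this is only an illustrative sketch: mount_9p_cmd is a hypothetical helper, and trans=virtio with version=9p2000.L are assumed transport options that the source does not state. The command is printed, not run.

```shell
#!/bin/sh
# Illustrative only; in the project the mounts come from the NixOS VM config.
msize=$((100 * 1024 * 1024))   # 104857600 bytes

# Prints a mount command for a virtio 9p share with the given tag and target.
# The optional third argument adds extra mount options.
mount_9p_cmd() {
  tag=$1; target=$2; extra=${3:-}
  opts="trans=virtio,version=9p2000.L${extra:+,$extra}"
  printf 'mount -t 9p -o %s %s %s\n' "$opts" "$tag" "$target"
}

mount_9p_cmd project_share /project "msize=$msize"
mount_9p_cmd ssh_dir /home/sandbox/.ssh ro
```

The tag is the name the host side assigns to the share; the guest uses it in place of a device path.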

Metadata passing

The entrypoint command, API key, GitHub token, and locale settings are written to a temporary directory on the host and shared via 9p as /mnt/meta. The VM reads these files during shell init:

- /mnt/meta/entrypoint: command to run (claude or bash)

- /mnt/meta/apikey: Anthropic API key

- /mnt/meta/host_home: host user’s home path (for path reconstruction)

- /mnt/meta/host_project: host project path (for bind-mount)

- /mnt/meta/claude.json: Claude config file

- /mnt/meta/gh_token: GitHub token (when --gh-token is used)

- /mnt/meta/lang: LANG locale setting

- /mnt/meta/lc_all: LC_ALL locale setting
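The one-file-per-setting scheme can be sketched from both sides. This is a hypothetical illustration, not the backend's code; write_meta and read_meta are made-up names, and only two of the files are shown.

```shell
#!/bin/sh
# Hypothetical sketch of the host side and guest side of metadata passing.

# Host side: write one file per setting into a fresh temporary directory and
# print its path. The API key file is only written when a key is given.
write_meta() {
  entrypoint=$1; apikey=${2:-}
  dir=$(mktemp -d)
  printf '%s\n' "$entrypoint" > "$dir/entrypoint"
  if [ -n "$apikey" ]; then
    printf '%s\n' "$apikey" > "$dir/apikey"
  fi
  printf '%s\n' "$dir"
}

# Guest side: print a setting from the mounted directory, or nothing if the
# file is absent.
read_meta() {
  if [ -f "$1/$2" ]; then
    cat "$1/$2"
  fi
}

meta=$(write_meta bash)
echo "entrypoint: $(read_meta "$meta" entrypoint)"
rm -rf "$meta"
```

Optional settings are represented by a missing file, so the guest can tell "not provided" apart from any real value.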

Nix parameters

| Parameter | Type | Default | Description |

|---|---|---|---|

| network | bool | true | Enable DHCP networking (false empties vlans) |

| extraModules | list of NixOS modules | [] | Extra NixOS config for the VM |

| nixos | function | (required) | NixOS evaluator |

Customization example

pkgs.callPackage ./nix/backends/vm.nix {
  nixos = args: nixpkgs.lib.nixosSystem {
    system = "x86_64-linux";
    modules = args.imports;
  };
  extraModules = [{
    virtualisation.memorySize = 8192;
    virtualisation.cores = 8;
    environment.systemPackages = with pkgs; [ python3 ];
  }];
}

Requirements

- KVM recommended (/dev/kvm) for reasonable performance

- Works without KVM but is significantly slower (software emulation)

Remote Manager

Run sandboxes on a remote server and manage them from your laptop via a web dashboard or CLI. The manager is a Rust/Axum daemon that orchestrates sandbox lifecycles, captures live screenshots, and collects metrics.

Architecture

laptop                              remote server
│                                   │
│ claude-remote create ...          │ manager daemon (127.0.0.1:3000)
│ ─────────────────────────────────>│ ├── starts Xvfb display
│                                   │ ├── starts tmux session
│ claude-remote attach <id>         │ ├── runs sandbox backend
│ ─────────────────────────────────>│ ├── captures screenshots
│                                   │ └── collects metrics
│ claude-remote ui                  │
│ open http://localhost:3000        │ web dashboard (htmx, live refresh)
│ ─────────────────────────────────>│

All communication happens over SSH — the CLI runs ssh $HOST curl ... to talk to the manager’s localhost-only HTTP API.
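The SSH-wrapped call pattern can be sketched as a function that assembles the command line. This is a hypothetical illustration, not the CLI's code: remote_api_cmd is a made-up name, the settings are read from the CLI's documented CLAUDE_REMOTE_* variables, and the command is only printed.

```shell
#!/bin/sh
# Hypothetical sketch; the real CLI may build its commands differently.

# Prints the ssh command that would call a manager API path on the remote host.
remote_api_cmd() {
  method=$1; path=$2
  host=${CLAUDE_REMOTE_HOST:?CLAUDE_REMOTE_HOST is required}
  port=${CLAUDE_REMOTE_PORT:-3000}
  # Extra SSH options are inserted only when set.
  printf 'ssh %s%s curl -s -X %s http://127.0.0.1:%s%s\n' \
    "${CLAUDE_REMOTE_SSH_OPTS:+$CLAUDE_REMOTE_SSH_OPTS }" \
    "$host" "$method" "$port" "$path"
}

CLAUDE_REMOTE_HOST=myserver
remote_api_cmd GET /api/sandboxes
```

Because curl runs on the server and targets 127.0.0.1, the manager's port never has to be reachable from the network.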

Running the manager

# Build

nix build github:jhhuh/claude-code-nix-sandbox#manager

# Run

MANAGER_LISTEN=127.0.0.1:3000 ./result/bin/claude-sandbox-manager

Or deploy as a NixOS systemd service — see Manager Module.

Environment variables

| Variable | Default | Description |

|---|---|---|

| MANAGER_LISTEN | 127.0.0.1:3000 | Listen address and port |

| MANAGER_STATE_DIR | . | Directory for state.json persistence |

| MANAGER_STATIC_DIR | (set by Nix wrapper) | Path to static web assets |

Components

The manager daemon runs three concurrent tasks:

- HTTP server: Axum router serving pages, JSON API, htmx fragments, and static files

- Liveness monitor: checks tmux sessions every 5 seconds, marks dead sandboxes

- Screenshot loop: captures Xvfb displays (ImageMagick import) or QEMU QMP screendumps every 2 seconds

State persistence

Sandbox state is persisted as JSON in $MANAGER_STATE_DIR/state.json. On startup, the manager loads existing state and reconciles PIDs — any sandbox whose tmux session has disappeared is marked as dead.

Runtime dependencies

The Nix package wraps the manager binary with these tools on PATH:

- ImageMagick: Xvfb screenshot capture (import)

- socat: QEMU QMP communication

- tmux: sandbox session management

- Xvfb (xorgserver): virtual framebuffer for bubblewrap/container backends

- Sandbox backends: configured via sandboxPackages parameter

CLI (claude-remote)

claude-remote is a local CLI for managing sandboxes on a remote server. All commands run over SSH — no direct HTTP from your laptop.

Installation

Available in the devShell or as a standalone package:

# Via devShell

nix develop github:jhhuh/claude-code-nix-sandbox

claude-remote help

# Standalone

nix build github:jhhuh/claude-code-nix-sandbox#cli

./result/bin/claude-remote help

Configuration

Settings are resolved in order: environment variable > config file > default.

Config file

Location: ${XDG_CONFIG_HOME:-~/.config}/claude-remote/config

# ~/.config/claude-remote/config

host = myserver

port = 3000

ssh_opts = -i ~/.ssh/mykey

Lines starting with # are comments. Blank lines are ignored.

Environment variables

| Variable | Config key | Default | Description |

|---|---|---|---|

| CLAUDE_REMOTE_HOST | host | (none) | Remote server hostname (required) |

| CLAUDE_REMOTE_PORT | port | 3000 | Manager port on the remote |

| CLAUDE_REMOTE_SSH_OPTS | ssh_opts | (none) | Extra SSH options (e.g. -i ~/.ssh/key) |

Environment variables always override config file values.
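The lookup order (environment variable, then config file, then default) can be sketched as follows. This is a hypothetical illustration of the rule, not the CLI's parser; config_get and resolve are made-up helper names.

```shell
#!/bin/sh
# Hypothetical sketch of the lookup order; the real CLI may parse differently.

# Print the value of KEY from a "key = value" config file, skipping comment
# lines. Prints nothing if the key or the file is missing.
config_get() {
  key=$1; file=$2
  [ -f "$file" ] || return 0
  sed -n -e '/^[[:space:]]*#/d' \
         -e "s/^[[:space:]]*${key}[[:space:]]*=[[:space:]]*//p" "$file" | tail -n 1
}

# Resolve a setting: environment value, then config file, then default.
resolve() {
  env_value=$1; key=$2; file=$3; default=$4
  if [ -n "$env_value" ]; then
    printf '%s\n' "$env_value"
    return 0
  fi
  file_value=$(config_get "$key" "$file")
  printf '%s\n' "${file_value:-$default}"
}

config="${XDG_CONFIG_HOME:-$HOME/.config}/claude-remote/config"
resolve "${CLAUDE_REMOTE_PORT:-}" port "$config" 3000
```

If a key appears more than once in the file, this sketch takes the last occurrence; the source does not say how the real CLI treats duplicates.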

Commands

create

Create a new sandbox on the remote server.

claude-remote create <name> <backend> <project-dir> [--no-network] [--sync]

- <backend>: bubblewrap, container, or vm

- --no-network: disable network access

- --sync: rsync the local project directory to the remote before creating

list

List all sandboxes (alias: ls).

claude-remote list

Output shows id (first 8 chars), name, backend, status, and project directory.

attach

Attach to a sandbox’s tmux session over SSH.

claude-remote attach <id-prefix>

The id-prefix can be any unique prefix of the sandbox UUID.
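Prefix resolution amounts to finding exactly one ID that starts with the given string. The sketch below is hypothetical (resolve_id is a made-up helper, and the IDs are shortened placeholders); the real CLI may resolve prefixes differently.

```shell
#!/bin/sh
# Hypothetical sketch; the real CLI may resolve prefixes differently.

# Reads sandbox IDs on stdin (one per line) and prints the single ID that
# starts with the given prefix. Fails if no ID or more than one ID matches.
resolve_id() {
  awk -v p="$1" '
    index($0, p) == 1 { found = $0; n++ }
    END {
      if (n == 1) { print found; exit 0 }
      print (n ? "ambiguous" : "unknown") " sandbox id prefix: " p > "/dev/stderr"
      exit 1
    }'
}

printf '%s\n' a1b2c3d4-0000 a1f00000-1111 | resolve_id a1b
```

Rejecting ambiguous prefixes keeps commands such as stop and delete from acting on the wrong sandbox.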

stop

Stop a running sandbox.

claude-remote stop <id-prefix>

delete

Delete a sandbox (alias: rm).

claude-remote delete <id-prefix>

metrics

Show system metrics, and optionally sandbox-specific Claude session metrics.

claude-remote metrics # system only

claude-remote metrics <id-prefix> # system + sandbox Claude metrics

sync

One-shot rsync from local to remote.

claude-remote sync <local-dir> [remote-dir]

If remote-dir is omitted, it defaults to the same path as local-dir. Excludes .git/ and respects .gitignore.

watch

Continuous bidirectional sync using fswatch + rsync.

claude-remote watch <local-dir> [remote-dir]

- Performs an initial local-to-remote sync

- Watches for local file changes and syncs to remote (debounced with 100ms window)

- Polls remote-to-local every 2 seconds in the background

- Excludes .git/ and respects .gitignore

- Ctrl+C to stop
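A single local-to-remote pass, as used by sync and by the initial step of watch, could look like the sketch below. It is a hypothetical illustration: sync_cmd is a made-up helper, the exact flags the CLI uses are not stated in the source, and the rsync command is printed rather than run. The options shown are standard rsync options.

```shell
#!/bin/sh
# Hypothetical sketch of one local-to-remote sync pass; the real CLI may use
# a different set of rsync flags.
sync_cmd() {
  local_dir=$1; host=$2; remote_dir=${3:-$1}
  # Trailing slashes make rsync copy the directory contents rather than the
  # directory itself. The ":- .gitignore" filter applies per-directory
  # .gitignore files as exclude rules.
  printf "rsync -az --exclude=.git/ --filter=':- .gitignore' %s/ %s:%s/\n" \
    "${local_dir%/}" "$host" "${remote_dir%/}"
}

sync_cmd /home/user/project myserver
```

When the remote directory is omitted, the local path is reused on the remote side, matching the default described for sync.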

ui

Forward the web dashboard via SSH tunnel.

claude-remote ui

# Then open http://localhost:3000

REST API

The manager exposes a JSON API alongside the web dashboard. All endpoints listen on the configured MANAGER_LISTEN address (default 127.0.0.1:3000).

Endpoints

List sandboxes

GET /api/sandboxes

Returns a JSON array of all sandboxes, sorted by creation time (newest first).

curl localhost:3000/api/sandboxes

Get sandbox

GET /api/sandboxes/<id>

Returns a single sandbox by full UUID.

curl localhost:3000/api/sandboxes/<id>

Create sandbox

POST /api/sandboxes

Content-Type: application/json

Request body:

{
  "name": "my-project",
  "backend": "bubblewrap",
  "project_dir": "/home/user/project",
  "network": true
}

- backend: "bubblewrap", "container", or "vm"

- network: optional, defaults to true

Returns 201 Created with the sandbox JSON on success.

curl -X POST localhost:3000/api/sandboxes \

-H 'Content-Type: application/json' \

-d '{"name":"test","backend":"bubblewrap","project_dir":"/tmp/test","network":true}'

Stop sandbox

POST /api/sandboxes/<id>/stop

Returns 204 No Content on success.

curl -X POST localhost:3000/api/sandboxes/<id>/stop

Delete sandbox

DELETE /api/sandboxes/<id>

Returns 204 No Content on success.

curl -X DELETE localhost:3000/api/sandboxes/<id>

Get screenshot

GET /api/sandboxes/<id>/screenshot

Returns the latest screenshot as image/png. Returns 404 if no screenshot is available.

curl localhost:3000/api/sandboxes/<id>/screenshot -o screenshot.png

Get sandbox metrics

GET /api/sandboxes/<id>/metrics

Returns Claude session metrics parsed from the sandbox’s project directory (tokens used, tool calls, message count, etc.).

curl localhost:3000/api/sandboxes/<id>/metrics

Get system metrics

GET /api/metrics/system

Returns system-wide metrics: CPU usage, memory, disk, and load averages.

curl localhost:3000/api/metrics/system

Get logs

GET /api/sandboxes/<id>/logs

Returns the full log file (tmux pipe-pane output) as text/plain.

curl localhost:3000/api/sandboxes/<id>/logs

Stream logs (WebSocket)

GET /ws/sandboxes/<id>/logs

Upgrades to a WebSocket connection. Sends the last 1000 lines as initial backlog, then pushes new lines in real time as the sandbox produces output.

Sandbox object

{
  "id": "a1b2c3d4-...",
  "name": "my-project",
  "backend": "bubblewrap",
  "project_dir": "/home/user/project",
  "status": "running",
  "display_num": 50,
  "tmux_session": "claude-a1b2c3d4",
  "pid_xvfb": 12345,
  "qemu_qmp_socket": null,
  "network": true,
  "created_at": "2025-01-15T10:30:00Z"
}

- status: "running", "stopped", or "dead"

- display_num: Xvfb display number (bubblewrap/container backends)

- qemu_qmp_socket: QMP socket path (VM backend)

- tmux_session: tmux session name for attaching

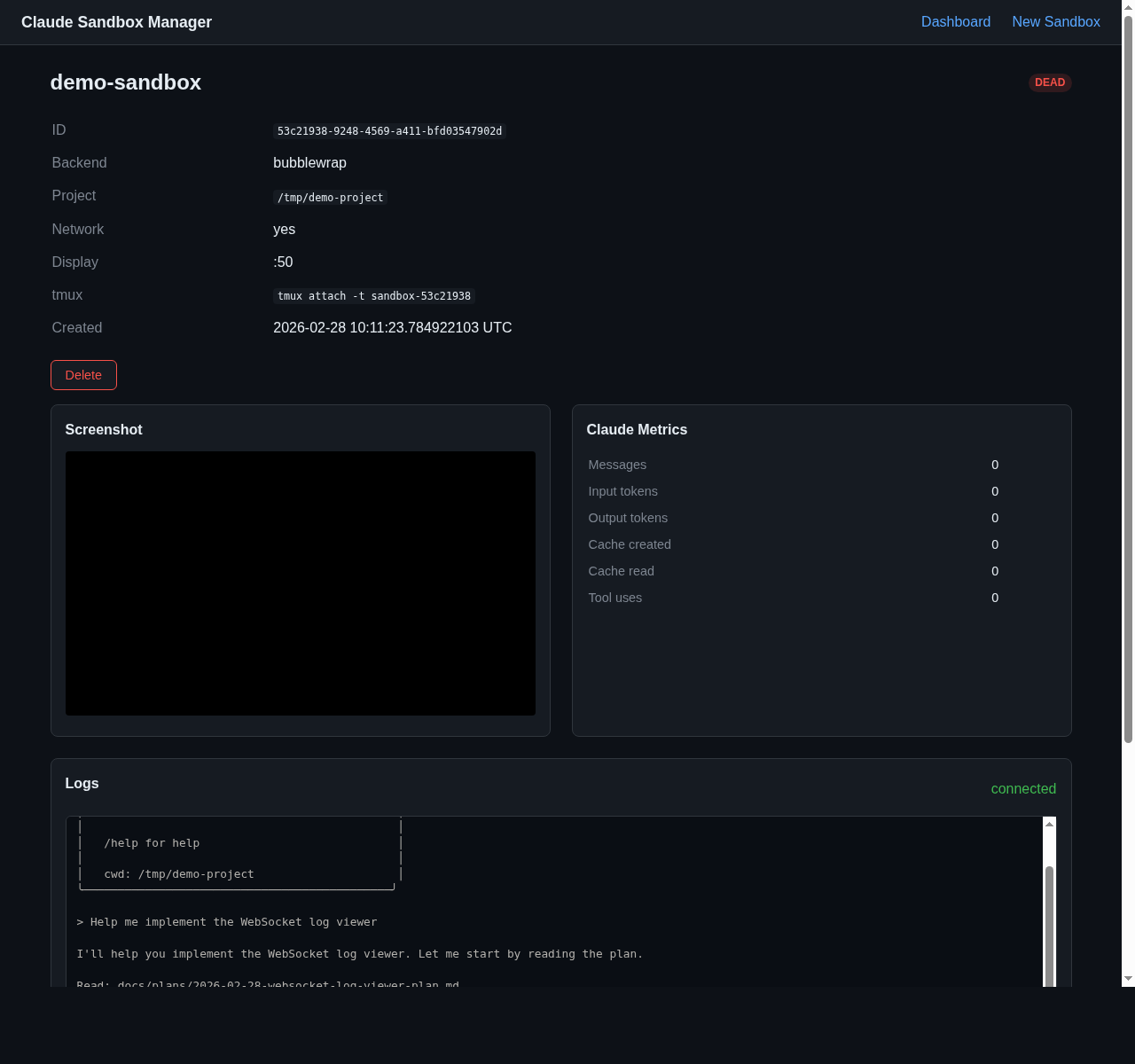

Web Dashboard

The manager includes a web dashboard for visual sandbox management. Access it via claude-remote ui (SSH tunnel) or directly if you can reach the manager’s listen address.

Features

- Sandbox list — all sandboxes with status badges, backend type, and creation time

- Live screenshots — captured every 2 seconds from Xvfb or QEMU QMP

- Sandbox detail — individual page with live screenshot feed, Claude session metrics, and real-time log viewer

- Real-time log streaming — WebSocket-powered terminal view of sandbox tmux output

- Create form — HTML form for creating new sandboxes

- System metrics — CPU, memory, disk usage

Sandbox Detail

Each sandbox detail page shows:

- Sandbox info — ID, backend, project directory, network status, display number, tmux session

- Live screenshot — auto-refreshing Xvfb or QEMU screendump

- Claude metrics — messages, input/output tokens, cache stats, tool uses (parsed from Claude’s JSONL session files)

- Log viewer — real-time streaming of the sandbox’s tmux output via WebSocket, with connection status indicator and auto-scroll

Technology

The dashboard is server-rendered HTML with htmx for auto-refreshing fragments. There is no JavaScript build step — htmx and CSS are vendored as static files, and HTML templates are compiled into the binary via askama.

Log streaming uses a WebSocket endpoint (/ws/sandboxes/<id>/logs) that tails the sandbox’s tmux pipe-pane log file and pushes new lines to connected clients in real time.

htmx fragments

The dashboard uses htmx polling to keep content fresh without full page reloads:

| Fragment endpoint | Description |

|---|---|

| /fragments/sandbox-list | Sandbox list on the index page |

| /fragments/system-metrics | System metrics display |

| /fragments/sandboxes/<id>/claude-metrics | Claude session metrics for a sandbox |

| /fragments/sandboxes/<id>/screenshot | Live screenshot <img> tag |

WebSocket endpoint

| Endpoint | Description |

|---|---|

| /ws/sandboxes/<id>/logs | Real-time log stream (sends last 1000 lines as backlog, then new lines as they appear) |

REST endpoint

| Endpoint | Description |

|---|---|

| /api/sandboxes/<id>/logs | Full log file as text/plain |

Pages

| URL | Description |

|---|---|

| / | Index: sandbox list + system metrics |

| /new | Create sandbox form |

| /sandboxes/<id> | Sandbox detail: screenshot + metrics + log viewer |

Sandbox NixOS Module

For NixOS users, a declarative module installs sandbox backends as system packages.

Usage

# flake.nix

{
  inputs.claude-sandbox.url = "github:jhhuh/claude-code-nix-sandbox";

  outputs = { nixpkgs, claude-sandbox, ... }: {
    nixosConfigurations.myhost = nixpkgs.lib.nixosSystem {
      modules = [
        claude-sandbox.nixosModules.default
        {
          services.claude-sandbox = {
            enable = true;
            container.enable = true;
            vm.enable = true;
          };
        }
      ];
    };
  };
}

Options

services.claude-sandbox.enable

Whether to install Claude Code sandbox wrappers.

- Type: bool

- Default: false

services.claude-sandbox.network

Allow network access from sandboxes. Applies to all enabled backends.

- Type: bool

- Default: true

services.claude-sandbox.bubblewrap.enable

Install the bubblewrap sandbox. Enabled by default when the module is active.

- Type: bool

- Default: true

services.claude-sandbox.bubblewrap.extraPackages

Extra packages available inside the bubblewrap sandbox.

- Type: list of package

- Default: []

services.claude-sandbox.container.enable

Install the systemd-nspawn container sandbox.

- Type: bool

- Default: false

services.claude-sandbox.container.extraModules

Extra NixOS modules for the container.

- Type: list of anything

- Default: []

services.claude-sandbox.vm.enable

Install the QEMU VM sandbox.

- Type: bool

- Default: false

services.claude-sandbox.vm.extraModules

Extra NixOS modules for the VM.

- Type: list of anything

- Default: []

Implied configuration

When enabled, the module also sets:

- nixpkgs.config.allowUnfree = true: may be required depending on claude-code source

- security.unprivilegedUsernsClone = true: when bubblewrap is enabled (required for user namespaces)

Example with customization

services.claude-sandbox = {
  enable = true;
  network = false; # isolate all backends
  bubblewrap.extraPackages = with pkgs; [ python3 nodejs ];
  container.enable = true;
  container.extraModules = [{
    environment.systemPackages = with pkgs; [ python3 ];
  }];
  vm.enable = true;
  vm.extraModules = [{
    virtualisation.memorySize = 8192;
  }];
};

Manager NixOS Module

Deploy the remote sandbox manager as a systemd service.

Usage

# flake.nix

{
  inputs.claude-sandbox.url = "github:jhhuh/claude-code-nix-sandbox";

  outputs = { nixpkgs, claude-sandbox, ... }: {
    nixosConfigurations.myhost = nixpkgs.lib.nixosSystem {
      modules = [
        claude-sandbox.nixosModules.manager
        {
          services.claude-sandbox-manager = {
            enable = true;
            sandboxPackages = [
              claude-sandbox.packages.x86_64-linux.default
            ];
          };
        }
      ];
    };
  };
}

Options

services.claude-sandbox-manager.enable

Enable the Claude Sandbox Manager web dashboard.

- Type: bool

- Default: false

services.claude-sandbox-manager.listenAddress

Address and port for the manager to listen on.

- Type: str

- Default: "127.0.0.1:3000"

services.claude-sandbox-manager.stateDir

Directory for persistent state (state.json).

- Type: str

- Default: "/var/lib/claude-manager"

services.claude-sandbox-manager.user

System user to run the manager as.

- Type: str

- Default: "claude-manager"

services.claude-sandbox-manager.group

System group to run the manager as.

- Type: str

- Default: "claude-manager"

services.claude-sandbox-manager.sandboxPackages

Sandbox backend packages to put on the manager’s PATH.

- Type: list of package

- Default: []

services.claude-sandbox-manager.containerSudoers

Add a sudoers rule allowing the manager user to run claude-sandbox-container without a password. Required if you want the manager to launch container-backend sandboxes.

- Type: bool

- Default: false

What the module creates

- A system user and group (claude-manager by default)

- A systemd service (claude-sandbox-manager.service) that:

  - Sets MANAGER_LISTEN and MANAGER_STATE_DIR environment variables

  - Puts sandboxPackages on PATH

  - Manages StateDirectory for persistent data

  - Restarts on failure (5 second delay)

- Optionally, a sudoers rule for the container backend

Example with container support

services.claude-sandbox-manager = {
  enable = true;
  listenAddress = "127.0.0.1:3001";
  sandboxPackages = [
    claude-sandbox.packages.x86_64-linux.default
    claude-sandbox.packages.x86_64-linux.container
  ];
  containerSudoers = true;
};

Customization

All backends are callPackage-able Nix functions, so you can override their parameters directly in your flake.

Extra packages (bubblewrap)

Add packages to the sandbox PATH via extraPackages:

packages.default = pkgs.callPackage ./nix/backends/bubblewrap.nix {
  extraPackages = with pkgs; [ python3 nodejs ripgrep ];
};

Extra NixOS modules (container / VM)

Add NixOS configuration to the container or VM via extraModules:

packages.container = pkgs.callPackage ./nix/backends/container.nix {
  nixos = args: nixpkgs.lib.nixosSystem {
    system = "x86_64-linux";
    modules = args.imports;
  };
  extraModules = [{
    environment.systemPackages = with pkgs; [ python3 nodejs ];
    # Any NixOS option works here
  }];
};

For the VM backend, you can also configure VM-specific options:

packages.vm = pkgs.callPackage ./nix/backends/vm.nix {
  nixos = args: nixpkgs.lib.nixosSystem {
    system = "x86_64-linux";
    modules = args.imports;
  };
  extraModules = [{
    virtualisation.memorySize = 8192;
    virtualisation.cores = 8;
    environment.systemPackages = with pkgs; [ python3 ];
  }];
};

Network isolation

All backends accept network = false to disable network access:

# Bubblewrap: adds --unshare-net
packages.isolated = pkgs.callPackage ./nix/backends/bubblewrap.nix {
  network = false;
};

# Container: adds --private-network
packages.container-isolated = pkgs.callPackage ./nix/backends/container.nix {
  nixos = args: nixpkgs.lib.nixosSystem { ... };
  network = false;
};

# VM: disables DHCP, empties vlans
packages.vm-isolated = pkgs.callPackage ./nix/backends/vm.nix {
  nixos = args: nixpkgs.lib.nixosSystem { ... };
  network = false;
};

Pre-built network-isolated variants are available as no-network, container-no-network, and vm-no-network packages.

Manager sandbox backends

Configure which backends the manager can use via sandboxPackages:

packages.manager = pkgs.callPackage ./nix/manager/package.nix {
  sandboxPackages = [
    (pkgs.callPackage ./nix/backends/bubblewrap.nix { })
    (pkgs.callPackage ./nix/backends/bubblewrap.nix { network = false; })
  ];
};

Using as a flake input

{
  inputs.claude-sandbox.url = "github:jhhuh/claude-code-nix-sandbox";
  inputs.claude-code-nix.url = "github:sadjow/claude-code-nix";

  outputs = { nixpkgs, claude-sandbox, claude-code-nix, ... }:
    let
      pkgs = import nixpkgs {
        system = "x86_64-linux";
        overlays = [ claude-code-nix.overlays.default ];
      };
    in {
      # Use a backend directly (needs claude-code-nix overlay for pkgs.claude-code)
      packages.x86_64-linux.my-sandbox = pkgs.callPackage
        "${claude-sandbox}/nix/backends/bubblewrap.nix"
        { extraPackages = [ pkgs.python3 ]; };

      # Or use the pre-built packages (overlay already applied)
      packages.x86_64-linux.sandbox = claude-sandbox.packages.x86_64-linux.default;
    };
}

Architecture

Directory structure

flake.nix                  # Entry point: packages, checks, nixosModules, devShells
nix/sandbox-spec.nix       # Single source of truth for sandbox requirements
nix/chromium.nix           # Chromium wrapper with extension policy
nix/backends/
  bubblewrap.nix           # bwrap sandbox: unprivileged, user namespaces
  container.nix            # systemd-nspawn container: requires root
  vm.nix                   # QEMU VM: separate kernel, hardware virtualization
nix/modules/
  sandbox.nix              # NixOS module for declarative sandbox configuration
  manager.nix              # NixOS module for the manager systemd service
nix/manager/
  package.nix              # rustPlatform.buildRustPackage for the manager daemon
scripts/
  claude-remote.nix        # writeShellApplication CLI for remote management
manager/                   # Rust/Axum web dashboard + REST API
  src/
    main.rs                # Axum router, background tasks (monitor + screenshot)
    state.rs               # Sandbox/ManagerState types, JSON persistence
    api.rs                 # Page handlers + JSON REST API
    fragments.rs           # htmx partial handlers for auto-refreshing
    sandbox.rs             # Lifecycle: Xvfb → tmux → backend → monitor
    display.rs             # Xvfb spawn/kill, display number allocation
    session.rs             # tmux create/check/kill
    screenshot.rs          # Xvfb capture (ImageMagick) + VM QMP screendump
    metrics.rs             # sysinfo metrics + Claude JSONL session parser
  templates/               # askama HTML templates
  static/                  # Vendored htmx.min.js + style.css
tests/
  manager.nix              # NixOS VM integration test

Design principles

- Pure Nix: all orchestration is Nix expressions, no shell/Python wrappers for coordination

- One backend per file: each backend is a self-contained callPackage-able function in nix/backends/

- Spec-driven: nix/sandbox-spec.nix is the single source of truth for packages, extension IDs, and /etc paths. Backends import the spec and implement how to deliver each requirement

- Chromium from nixpkgs: always pkgs.chromium, never a manual download. Chromium is excluded from the spec because bwrap uses a chromiumSandbox wrapper while container/VM use stock chromium

- Dynamic bash arrays: backends build bwrap/nspawn/QEMU argument lists conditionally using bash arrays for optional features (display, D-Bus, GPU, auth, network)
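As an illustration of the dynamic-array principle, here is a minimal sketch and not the project's actual launcher script. The function name build_bwrap_args, the NETWORK variable, and the chosen bind paths are assumptions made for this example; the bwrap flags themselves (--ro-bind, --setenv, --die-with-parent, --unshare-net) are standard bubblewrap options.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical helper: prints one bwrap argument per line.
# NETWORK and the X11 socket path are illustrative assumptions.
build_bwrap_args() {
  local network="${NETWORK:-1}"

  # Flags that are always present.
  local args=(--die-with-parent --ro-bind /nix/store /nix/store)

  # Optional feature: forward X11 only when DISPLAY is set and non-empty.
  if [[ -n "${DISPLAY:-}" ]]; then
    args+=(--ro-bind /tmp/.X11-unix /tmp/.X11-unix --setenv DISPLAY "$DISPLAY")
  fi

  # Optional feature: drop network access.
  if [[ "$network" == 0 ]]; then
    args+=(--unshare-net)
  fi

  printf '%s\n' "${args[@]}"
}
```

Appending to an array keeps each argument a separate word, so values containing spaces survive intact when the array is later expanded as "${args[@]}".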

Backend pattern

Each backend follows the same structure:

- Nix function with { lib, pkgs, writeShellApplication, ..., network ? true, extraPackages/extraModules ? [] }

- Import spec: spec = import ../sandbox-spec.nix { inherit pkgs; } for packages and /etc paths

- Build a PATH or system closure: symlinkJoin with spec.packages (bubblewrap) or nixosSystem with spec.packages in environment.systemPackages (container/VM)

- Generate a shell script via writeShellApplication that:

  - Parses --shell, --gh-token flags and project directory argument

  - Conditionally builds arrays of flags for display, D-Bus, GPU, audio, auth, git, SSH, network

  - Execs the sandbox runtime (bwrap, systemd-nspawn, or QEMU VM script)
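The argument-parsing step of such a generated script might look roughly like the sketch below. It assumes that --shell and --gh-token are boolean switches taking no value, which the text above does not confirm; the function name parse_args and the variables want_shell, want_gh_token, and project_dir are hypothetical.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical parser. Assumes both flags are plain switches (no value).
# Results are left in the globals want_shell, want_gh_token, project_dir.
parse_args() {
  want_shell=0
  want_gh_token=0
  project_dir=""

  while [[ $# -gt 0 ]]; do
    case "$1" in
      --shell)    want_shell=1 ;;
      --gh-token) want_gh_token=1 ;;
      -*)         echo "unknown flag: $1" >&2; return 2 ;;
      *)          project_dir="$1" ;;
    esac
    shift
  done

  # The project directory is the one required positional argument.
  if [[ -z "$project_dir" ]]; then
    echo "usage: [--shell] [--gh-token] <project-dir>" >&2
    return 2
  fi
}
```

After parsing, a script following the pattern would build its flag arrays from these variables and finish with an exec of the sandbox runtime.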

Manager architecture

The manager daemon (manager/src/main.rs) runs three concurrent tokio tasks:

- HTTP server: Axum router with:

  - HTML pages (askama templates): index, new sandbox form, sandbox detail

  - JSON API: CRUD for sandboxes, screenshots, metrics

  - htmx fragments: auto-refreshing partial HTML responses

  - Static file serving: vendored htmx.min.js and CSS

- Liveness monitor (5s interval): reconciles tmux sessions, marks dead sandboxes

- Screenshot loop (2s interval): captures Xvfb displays via ImageMagick import or QEMU QMP screendump

State is shared via Arc<AppState> with tokio::sync::RwLock for the manager state and screenshot cache.
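The check behind the liveness monitor can be approximated from a shell. This is a sketch of the idea only: the real monitor is a Rust task, and the function name find_dead_sessions and the convention of passing session names as arguments are assumptions. The only external call is tmux has-session, with the = prefix requesting an exact name match.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical helper: given sandbox session names, print those whose
# tmux session no longer exists.
find_dead_sessions() {
  local name
  for name in "$@"; do
    # "=name" asks tmux for an exact match instead of a prefix match.
    if ! tmux has-session -t "=$name" 2>/dev/null; then
      printf '%s\n' "$name"
    fi
  done
}
```

Running a check like this on a fixed interval and marking the reported names as dead is the reconciliation described for the monitor task.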

CLI architecture

claude-remote is a writeShellApplication that wraps ssh, curl, jq, tmux, rsync, and fswatch. Every API call is executed as ssh $HOST curl -s ... — the CLI never makes direct HTTP requests.
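The ssh-wrapped call pattern can be sketched as follows. This is not the actual claude-remote source: the helper name remote_api, the MANAGER_ADDR variable, and the /api/sandboxes path in the usage comment are assumptions. The default address mirrors the listenAddress used in the NixOS module example.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical helper: run curl on the remote host through ssh, so the
# HTTP request originates on the server and never on the local machine.
remote_api() {
  local host="$1" method="$2" path="$3"
  local addr="${MANAGER_ADDR:-127.0.0.1:3001}"
  ssh "$host" curl -s -X "$method" "http://${addr}${path}"
}

# Example (hypothetical endpoint):
#   remote_api myserver GET /api/sandboxes | jq .
```

Because the request is issued on the server, the manager can stay bound to a loopback address and SSH is the only access path. Note that ssh joins its arguments into one string for the remote shell, so paths containing shell metacharacters would need extra quoting.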